Data Portal Overview

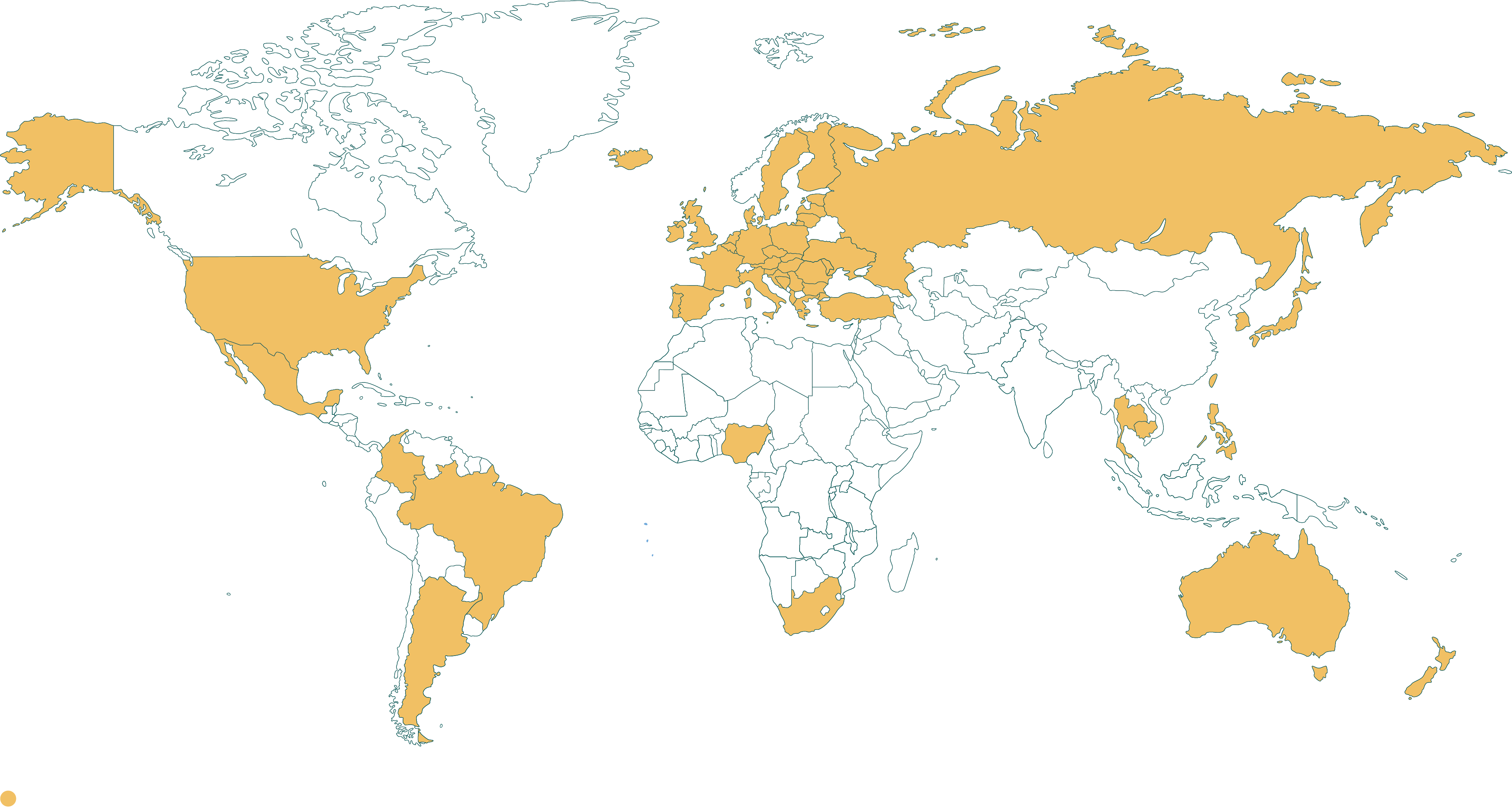

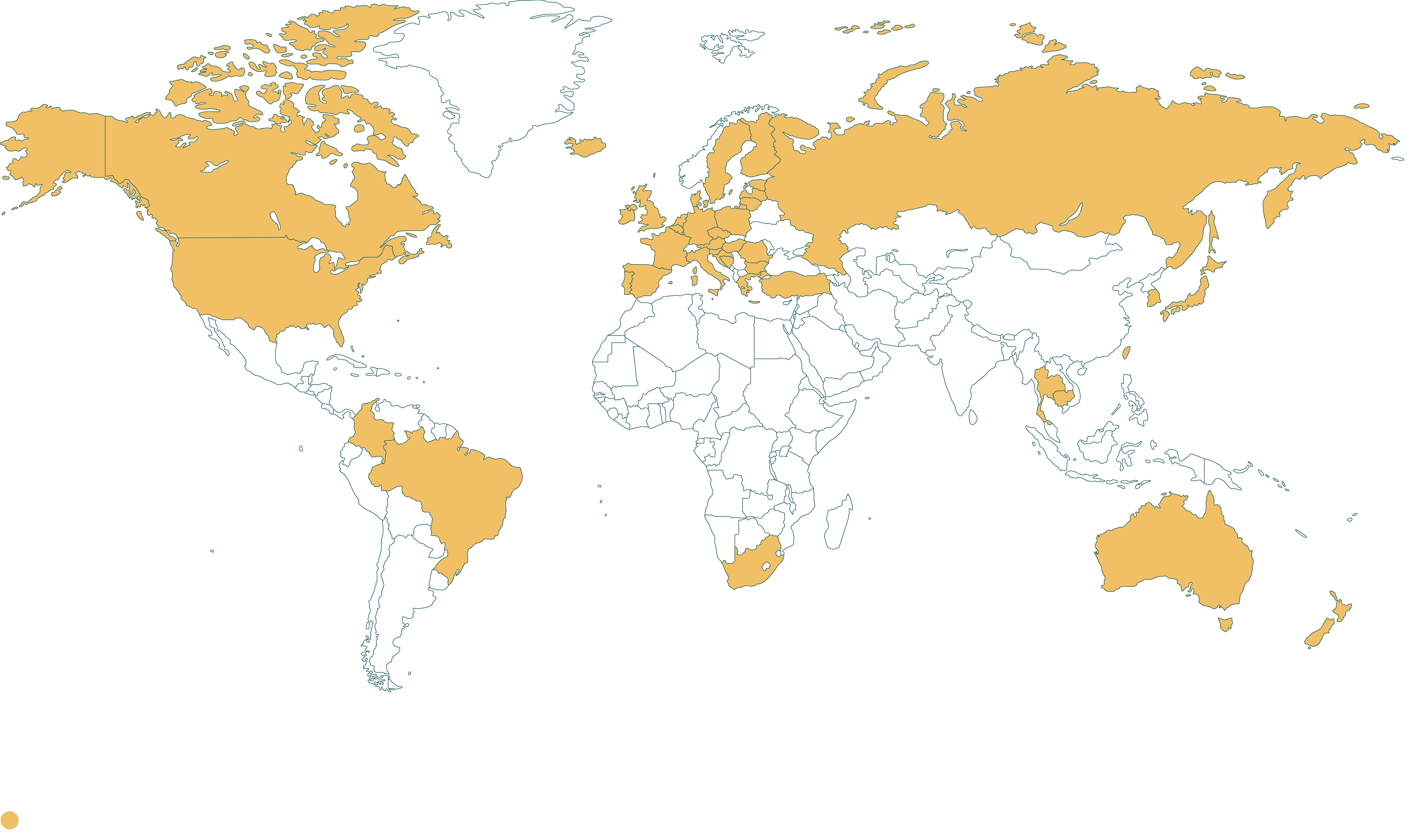

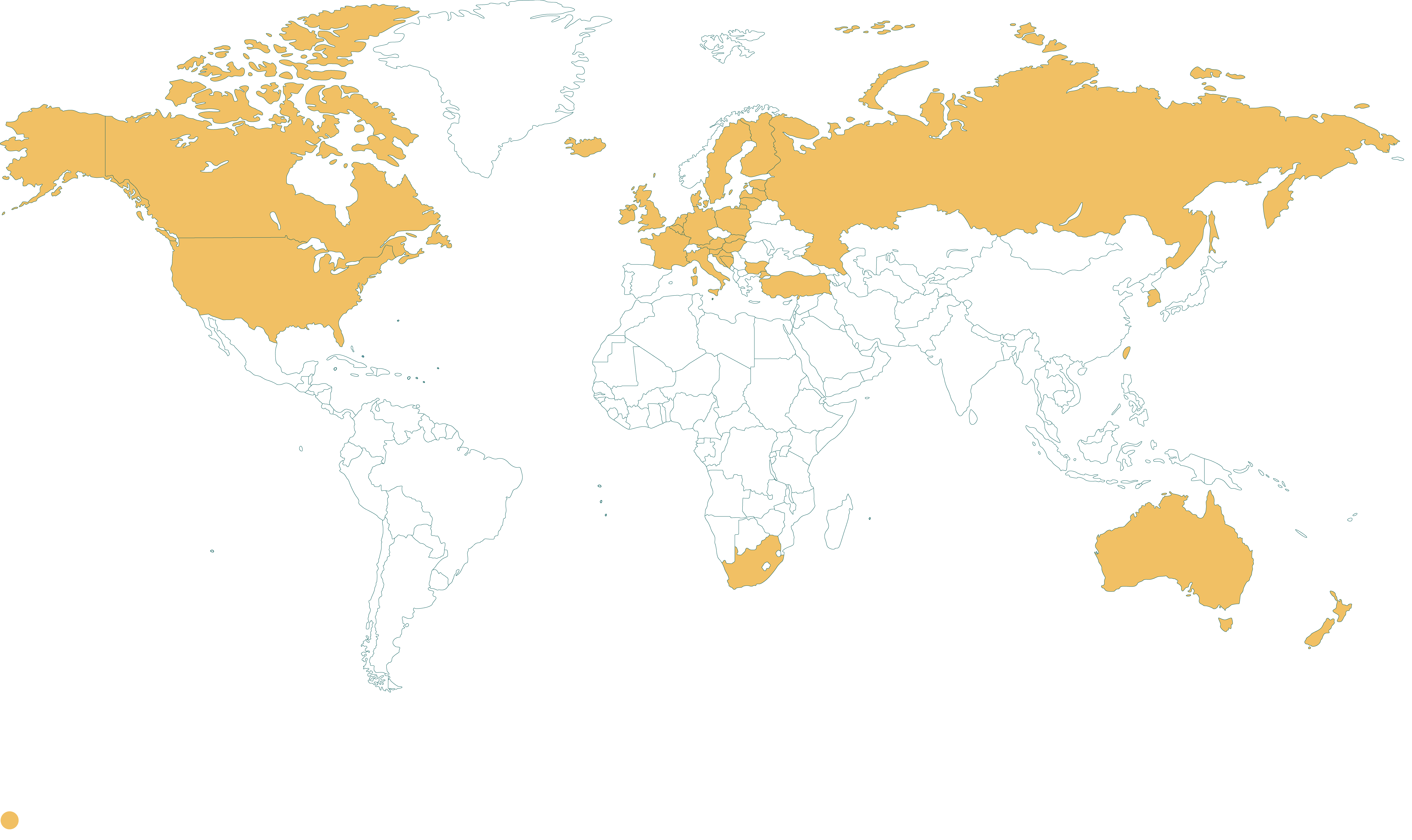

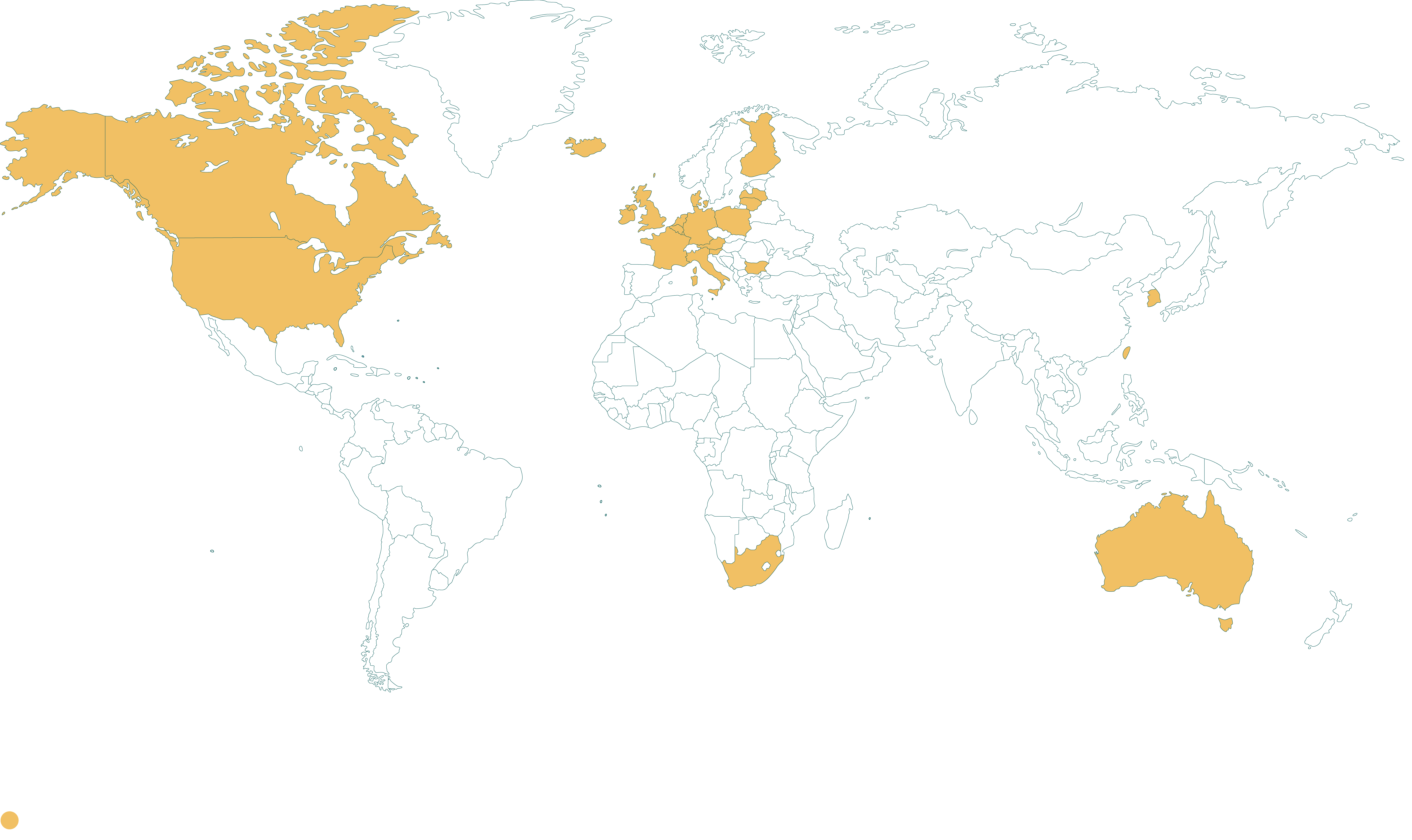

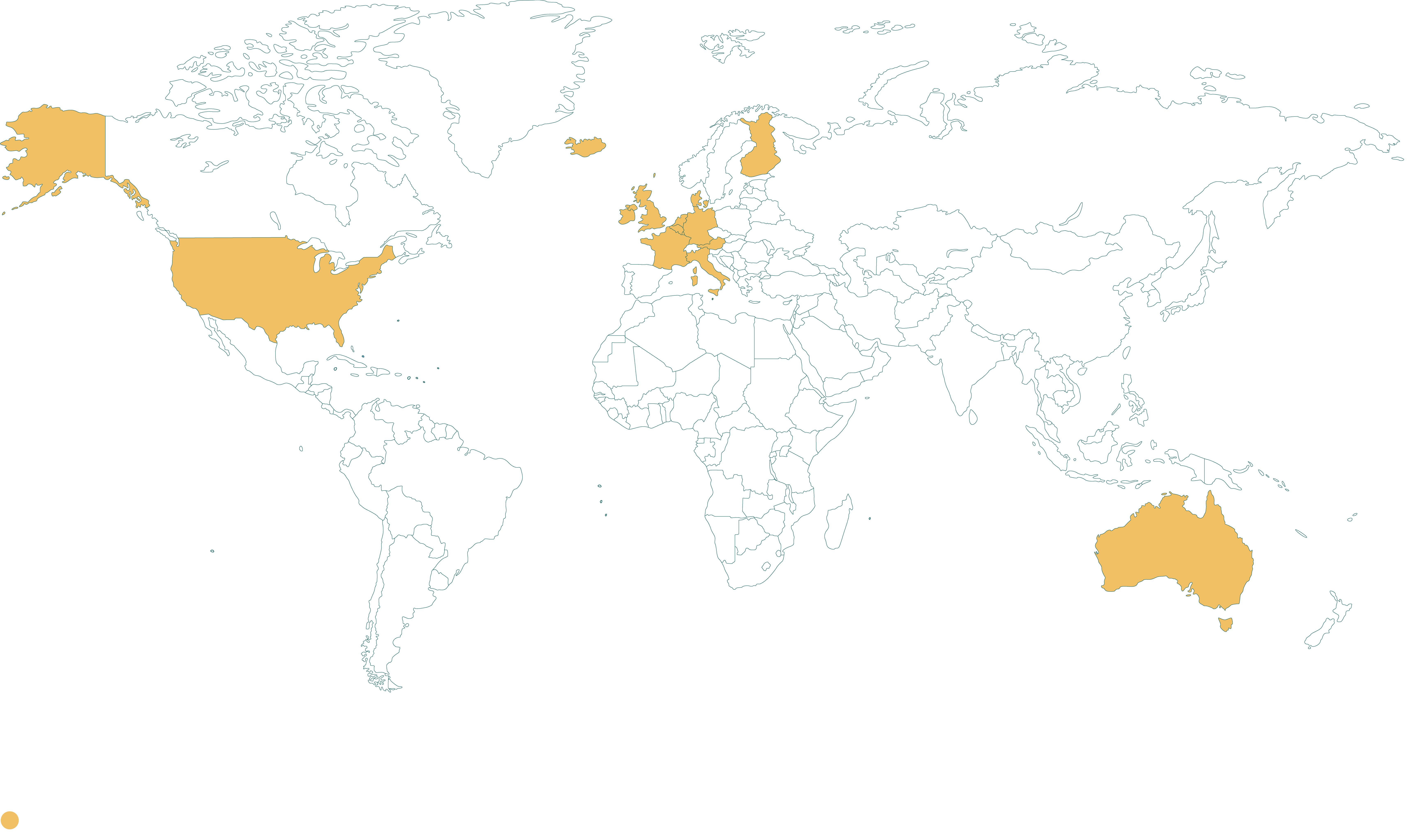

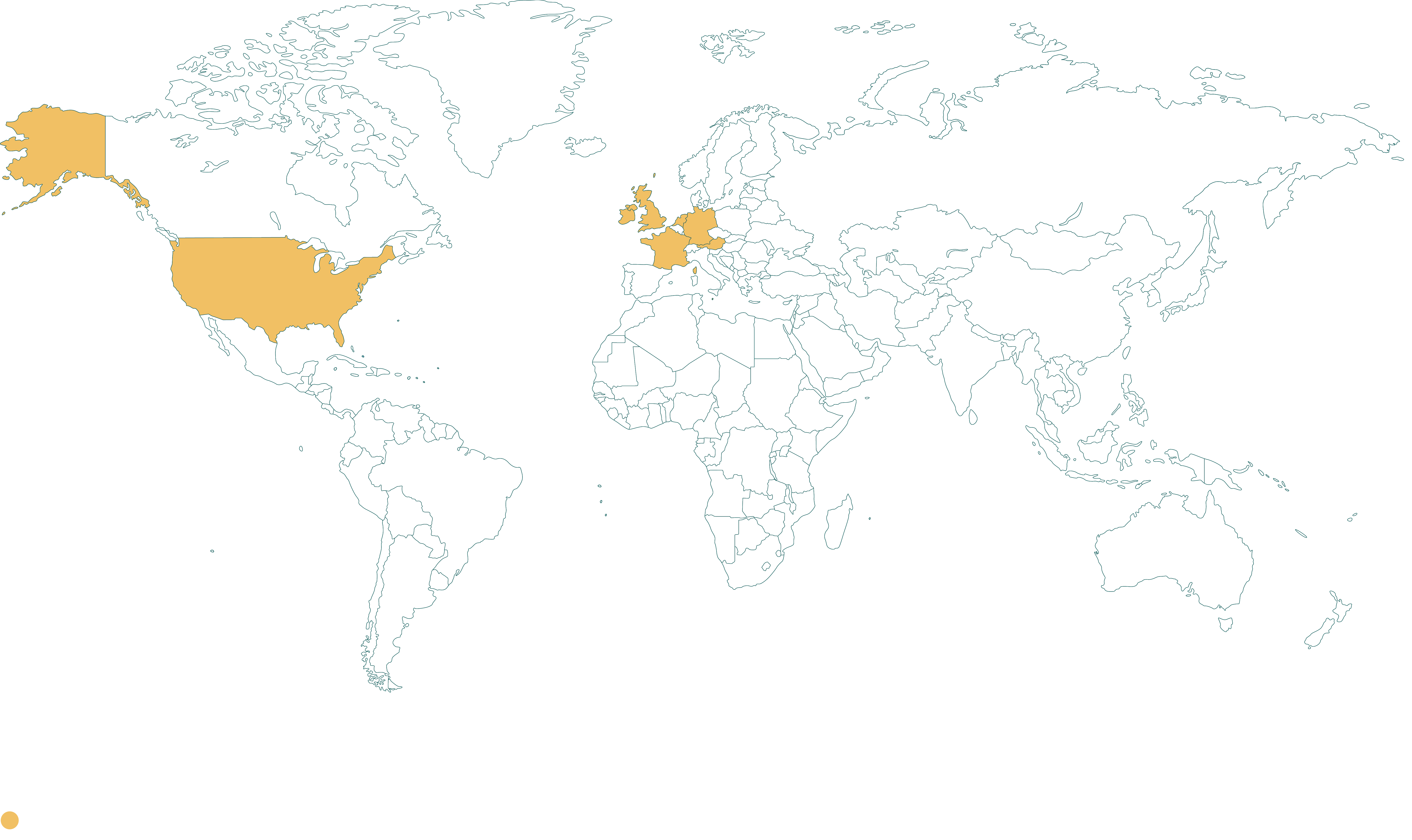

The INHOPE Data Portal dashboard is a project deliverable that serves as a public-facing platform to present key performance indicators (KPIs) and metrics related to the INHOPE network and the ICCAM platform. This interactive dashboard, built using Power BI and published on the INHOPE website, aims to provide easily understandable information to the public.

The dashboard, funded by the European Union, covers data from 2020 onwards, broken down by quarter and by year. It features nine indicators selected to align with INHOPE’s annual report, offering insight into the organisation's progress and performance.

Access the dashboard below.

Terminology

- The number of confirmed records of CSAM (child sexual abuse material) tracks the INHOPE network's success in identifying illegal content.

- The number of suspected records of CSAM represents the quantity of potentially illegal content reported. A higher number reflects how effectively the INHOPE hotline network encourages members of the public and analysts to report potentially illegal activity.

- The action step indicator shows, as a percentage of the total content, the share of content reported to law enforcement.

- To provide further context, triangle indicators are placed next to these four metrics. They display an upward or downward trend in comparison to previous quarters or years, based on the selection made in the time slicer.
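The action step percentage and the trend triangles come down to simple arithmetic. The following is a minimal Python sketch, not INHOPE's actual Power BI logic. It assumes the percentage is content reported to law enforcement divided by total content, and that a triangle compares a metric with the immediately preceding period. `PeriodFigures`, `action_step_percentage` and `trend_triangle` are hypothetical names, and the numbers in the usage lines are made up for illustration, not real INHOPE data.

```python
from dataclasses import dataclass


# Hypothetical container for one period's figures; field names are illustrative only.
@dataclass
class PeriodFigures:
    label: str            # period label, e.g. a quarter or a year
    total_content: int    # total content recorded in the period
    reported_to_le: int   # content reported to law enforcement in the period


def action_step_percentage(period: PeriodFigures) -> float:
    """Assumed definition: share of the period's total content reported to law enforcement, in percent."""
    if period.total_content == 0:
        return 0.0  # avoid division by zero for an empty period
    return 100.0 * period.reported_to_le / period.total_content


def trend_triangle(current: float, previous: float) -> str:
    """Return an upward or downward triangle comparing a metric with the previous period."""
    if current > previous:
        return "▲"
    if current < previous:
        return "▼"
    return "="  # assumption: an unchanged value is shown as "="


# Made-up figures for illustration only, not INHOPE data.
q1 = PeriodFigures("Q1", total_content=200, reported_to_le=150)
q2 = PeriodFigures("Q2", total_content=400, reported_to_le=320)
print(trend_triangle(action_step_percentage(q2), action_step_percentage(q1)))
```

With these toy figures the share rises from 75% to 80%, so the sketch prints an upward triangle. Switching the time slicer between quarters and years would, under this assumption, only change which two periods are passed to `trend_triangle`.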